Context Matters

John Frizelle

When people first start using large language models, one detail quickly becomes impossible to ignore: they can only work with a limited amount of information at once.

That limit is called the context window.

If you are new to this space, it helps to think of a context window as the model's working set for the current moment. It is everything the model can actively "see" while generating its next response. That usually includes the system instructions, the current user message, recent conversation history, tool outputs, retrieved documents, and any other text we decide to place in front of the model.

That sounds simple enough. In practice, it is one of the hardest engineering problems in AI systems.

What is a token?

Before we talk about context windows, we need one more piece of vocabulary: tokens.

AI models do not read text the way humans do. They process text in smaller chunks called tokens. A token might be a short word, part of a longer word, punctuation, whitespace, or a small fragment of code. So when people say a model has a context window of a certain size, they usually mean it can accept up to a certain number of tokens, not characters or words.

That matters because token counts add up faster than most people expect.
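
To make that concrete, here is a rough sketch of how quickly token counts grow. The four-characters-per-token ratio is a common rule of thumb for English text, not a property of any particular model, and `estimate_tokens` is a hypothetical helper. A real system would use the tokenizer that belongs to its model.

```python
import math

# Assumption: roughly 4 characters per token, a common rule of thumb for
# English prose. Real tokenizers vary by model, language, and content type.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate for text (hypothetical helper)."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


system_prompt = "You are a helpful assistant. " * 20   # 580 characters
tool_output = '{"id": 1, "status": "ok"}\n' * 500       # 13,000 characters

print(estimate_tokens(system_prompt))  # 145
print(estimate_tokens(tool_output))    # 3250
```

Even under this crude estimate, a single repetitive tool result outweighs the instructions by more than twenty to one.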

What happens when the context window fills up?

When the context window gets full, something has to give.

Sometimes the failure is obvious. A request may fail with an error because the combined input is too large. But often the problem is quieter than that. The system starts dropping parts of the history, trimming tool outputs, or replacing details with summaries. The model still responds, but now it may be working from an incomplete picture.

This is where people start seeing familiar symptoms:

The model forgets an earlier decision

It repeats work that was already done

It misses an important constraint

It answers confidently, but without key background

Latency and cost increase because too much text is being sent over and over

In other words, the issue is not just "can the request fit?" It is "can the model still reason well with what remains?"
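
The quiet failure mode described above is easy to reproduce. The sketch below shows one naive strategy: drop the oldest messages until the rest fits a token budget. The message format, the budget, and the character-based token estimate are all assumptions for illustration, not a description of how any specific product behaves.

```python
import math


def estimate_tokens(text: str) -> int:
    # Assumption: ~4 characters per token; a real system would use the
    # model's own tokenizer.
    return math.ceil(len(text) / 4)


def trim_oldest(messages: list[dict], budget: int) -> list[dict]:
    """Keep the newest messages that fit in `budget` tokens, dropping the oldest first."""
    kept: list[dict] = []
    used = 0
    for message in reversed(messages):           # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > budget:
            break                                # everything older is dropped silently
        kept.append(message)
        used += cost
    return list(reversed(kept))                  # restore chronological order


history = [
    {"role": "user", "content": "Constraint: never email customers directly."},
    {"role": "assistant", "content": "Understood. " + "Here is a long plan. " * 40},
    {"role": "user", "content": "Great, now draft the follow-up."},
]

trimmed = trim_oldest(history, budget=225)
print([m["role"] for m in trimmed])                          # ['assistant', 'user']
print(any("never email" in m["content"] for m in trimmed))   # False
```

The request now fits, and nothing errors, but the constraint from the first message is no longer in front of the model.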

Bigger windows help, but they do not remove the problem

It is tempting to assume that larger context windows solve all of this. They absolutely help. A model that can handle more information at once is easier to work with, especially in longer conversations and with tools that return large outputs.

But bigger is not the same as unlimited, and it is not the same as perfect recall.

Even with larger-window models, a few things still happen:

Conversations keep growing

Tool outputs can be enormous

Important details become harder to keep salient

Costs rise with prompt size

Quality can degrade as the model has to attend over more and more material

This is part of why people have started talking about "context rot." The phrase is informal, but the idea is useful: as more material accumulates, not all of it remains equally helpful. Some information becomes stale, some becomes redundant, some becomes distracting, and some gets crowded out by sheer volume.

So the problem is not only one of storage. It is one of attention.
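
The cost point in the list above is simple arithmetic. Assuming every turn adds about the same number of tokens and the full transcript is resent on each request (both simplifying assumptions), cumulative input tokens grow quadratically with the number of turns. The function name and the 500-token figure are illustrative only.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens sent over a conversation when the whole
    transcript is resent on every turn.

    Turn i sends i * tokens_per_turn tokens, so the total is
    tokens_per_turn * turns * (turns + 1) / 2.
    """
    return tokens_per_turn * turns * (turns + 1) // 2


# Assumption: 500 tokens added per turn, purely for illustration.
for turns in (10, 50, 100):
    print(turns, cumulative_input_tokens(turns, 500))
# 10 27500
# 50 637500
# 100 2525000
```

Ten times as many turns costs roughly ninety times as many input tokens in this model, which is why resending everything stops being viable well before the window itself is full.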

What actually accumulates in context?

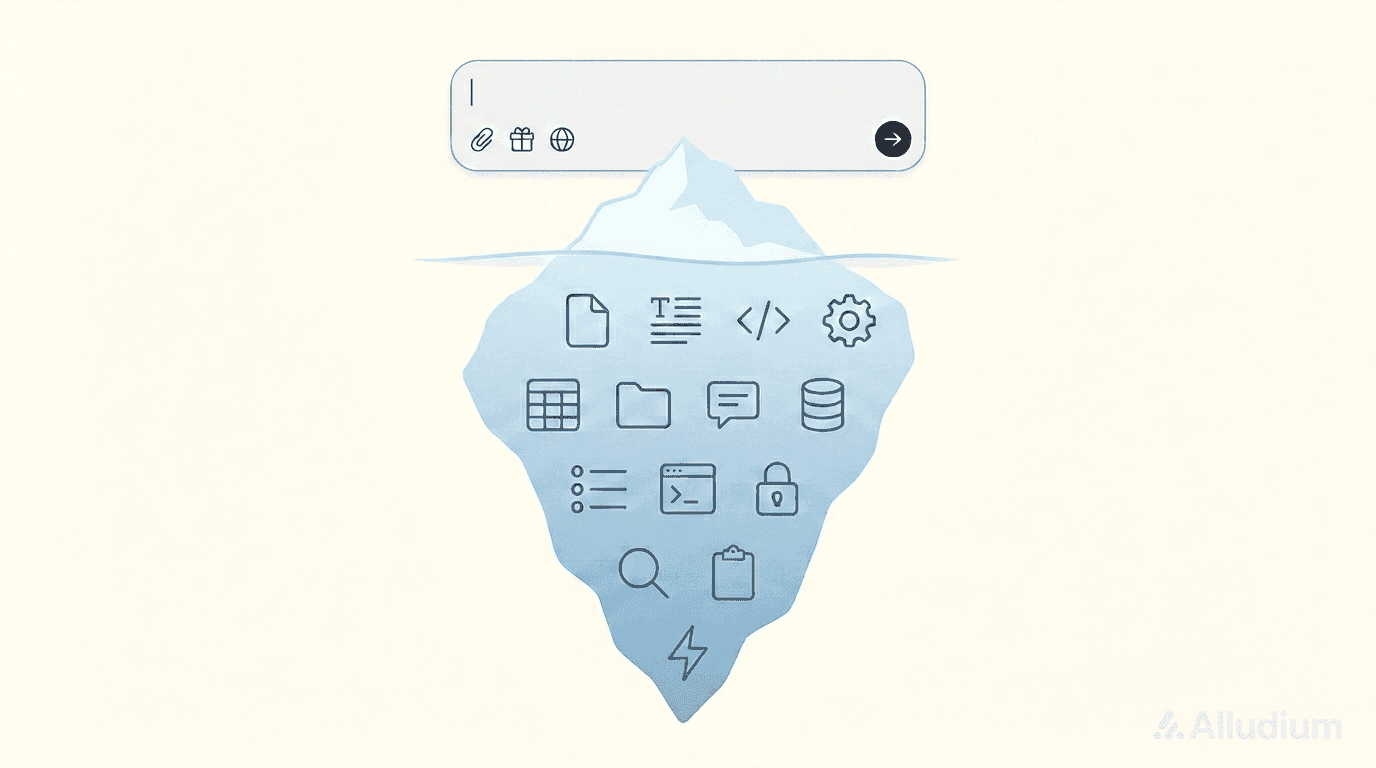

One reason this issue can be surprising is that users usually see only the conversation. The system sees much more.

In a production AI workflow, the context window may contain:

The instructions that define the agent's role

The conversation itself

Safety and policy text

Tool schemas and tool results

Retrieved files, documents, or web content

Intermediate reasoning artifacts or summaries

State carried forward from earlier turns

This is why context management becomes a real systems problem. It is not just about remembering the conversation. It is about deciding what belongs in the model's working set right now, what can be compressed, and what should stay outside the prompt until it is needed.
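
One way to make that accumulation visible is to tally the prompt by component before each request. The sketch below is illustrative only: `ContextLedger` is a hypothetical structure, the component names mirror the list above, and the sizes are made-up numbers rather than measurements from a real system.

```python
from dataclasses import dataclass, field


@dataclass
class ContextLedger:
    """Tracks estimated token usage per prompt component (hypothetical structure)."""
    window: int                                   # model's context limit, in tokens
    usage: dict[str, int] = field(default_factory=dict)

    def add(self, component: str, tokens: int) -> None:
        self.usage[component] = self.usage.get(component, 0) + tokens

    def total(self) -> int:
        return sum(self.usage.values())

    def remaining(self) -> int:
        return self.window - self.total()

    def largest(self) -> str:
        """Name of the component consuming the most tokens."""
        return max(self.usage, key=self.usage.get)


# Made-up sizes for illustration only.
ledger = ContextLedger(window=128_000)
ledger.add("system_instructions", 1_500)
ledger.add("tool_schemas", 4_000)
ledger.add("conversation", 12_000)
ledger.add("tool_results", 60_000)
ledger.add("retrieved_documents", 25_000)

print(ledger.total())      # 102500
print(ledger.remaining())  # 25500
print(ledger.largest())    # tool_results
```

In this invented example the visible conversation is a small fraction of the prompt, which is the situation the section describes: the user sees the chat, while the system carries everything else.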

Our view: context should be managed, not merely enlarged

At Alludium, one of the clearest lessons from building real AI workflows has been that context cannot be treated as an ever-growing transcript.

If you do that, the system gets slower, more expensive, and less reliable over time.

Instead, we think of context management as an active discipline. The goal is to keep the model focused on the information that is most useful for the current step, while still preserving continuity across longer interactions.

At a high level, that means a few things:

Keeping the active prompt bounded

Preserving important recent context

Compacting or summarizing older material when appropriate

Storing full detail outside the prompt rather than forcing everything into it

Letting the system bring specific details back when they are needed

That is the heart of compaction: not deleting meaning, but reorganizing it so the model can stay effective.
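
The steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Alludium's implementation: `compact`, the `archive` dictionary, and the truncating `summarize` placeholder are all hypothetical, and a real system would likely use a model call or structured extraction to produce the summary.

```python
def summarize(messages: list[str], max_chars: int = 80) -> str:
    # Placeholder: keep only the start of each older message.
    # A real system would produce a proper summary here.
    return " | ".join(m[:max_chars] for m in messages)


def compact(history: list[str], keep_recent: int,
            archive: dict[str, list[str]]) -> list[str]:
    """Return a bounded prompt: one summary line plus the most recent messages.

    The full text of the older messages is stored in `archive` so it can
    be brought back later instead of living in every prompt.
    """
    if len(history) <= keep_recent:
        return list(history)                      # nothing to compact yet
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    key = f"archive-{len(archive) + 1}"
    archive[key] = older                          # full detail lives outside the prompt
    summary = (f"[summary of {len(older)} earlier messages, see {key}] "
               f"{summarize(older)}")
    return [summary] + recent


archive: dict[str, list[str]] = {}
history = [f"message {i}" for i in range(1, 11)]
prompt = compact(history, keep_recent=3, archive=archive)

print(len(prompt))               # 4
print(prompt[1:])                # ['message 8', 'message 9', 'message 10']
print(archive["archive-1"][0])   # message 1
```

The archive key embedded in the summary line is what lets a later step look up the original messages when a specific detail is needed again.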

What compaction really means

Compaction can sound mysterious, but the basic idea is approachable.

Imagine a desk while you are working on a project. If every note, email, document, and draft from the last three weeks stays open in front of you, the desk becomes unusable. A better approach is to keep the most relevant items in view, file away what is no longer active, and maintain a concise record of the decisions that still matter.

That is very close to how we think about model context.

The model does not need every historical detail in every turn. It needs the right details, in the right shape, at the right time.

So our system is designed to do things like:

Keep recent and relevant context close at hand

Carry forward the important decisions, constraints, and open threads

Avoid repeatedly sending large blocks of low-value text

Prefer structured, bounded context over unbounded accumulation

What we deliberately do not do is treat "longer prompt" as the same thing as "better memory."
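
One possible shape for the carried-forward decisions, constraints, and open threads is a small structured record rather than raw transcript. The `WorkingState` name, its fields, and the per-section cap below are assumptions for illustration; the actual data model is not described here.

```python
from dataclasses import dataclass, field


@dataclass
class WorkingState:
    """Bounded record of what must survive compaction (hypothetical schema)."""
    decisions: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    open_threads: list[str] = field(default_factory=list)

    def render(self, max_items: int = 5) -> str:
        """Render each section as text, keeping only the newest `max_items` entries."""
        sections = [
            ("Decisions", self.decisions),
            ("Constraints", self.constraints),
            ("Open threads", self.open_threads),
        ]
        lines: list[str] = []
        for title, items in sections:
            shown = items[max(0, len(items) - max_items):]
            if not shown:
                continue                          # skip empty sections entirely
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in shown)
        return "\n".join(lines)


state = WorkingState()
state.decisions.append("Use PostgreSQL for the reporting store")
state.constraints.append("No customer data leaves the EU region")
state.open_threads.append("Confirm export format with finance")
print(state.render())
```

Capping by recency keeps the rendered text bounded, but it is only the simplest possible policy. A constraint that is old is not necessarily one that has stopped applying, so a real system would need a more careful rule for what may be dropped.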

Why this matters for users

Good context management is mostly invisible when it works.

Users experience it as a system that stays coherent for longer, wastes less effort, and is less likely to lose the thread halfway through a meaningful task. They do not need to understand every internal mechanism. They just notice that the agent feels steadier, more selective, and more useful.

That matters even more as AI systems move beyond simple chat and start interacting with tools, files, code, documents, and multi-step workflows. In those environments, context is not just a technical limit. It becomes part of the product experience.

The bigger shift

For a while, much of the conversation in AI focused on model quality in isolation: bigger models, better benchmarks, longer windows.

Those things matter. But building reliable AI products has pushed the industry toward a broader truth: context engineering matters too.

How information is selected, structured, compressed, and retrieved can have just as much impact on usefulness as the raw size of the model's context window.

That has certainly been true for us.

The more we have worked on long-running, tool-using AI systems, the clearer it has become that context management is not a nice-to-have layer around the model. It is a core part of making the model work well in the real world.

Context matters

A context window is often described as a technical specification. In practice, it is much closer to a design constraint on intelligence.

It shapes what the model can pay attention to, what it can keep track of, and how reliably it can continue a thread over time.

That is why we have spent so much time on compaction and context management. Not because the goal is to hide information from the model, but because the goal is to help the model stay oriented inside a bounded working set.

The future of useful AI systems will not come from larger windows alone. It will also come from better ways of deciding what belongs inside them.
