The person who knows the job has to build the agent

Hugh Fitzpatrick

No-code has always been sold as a convenience. Faster, cheaper, no developer needed. For traditional software, that argument was basically true, and basically weak. A real developer, given enough time, could always build it better. The underlying assumption was that requirements were stable and transferable. You could write a spec, hand it to an engineer, and get back something that worked. Non-technical people using no-code tools were taking a shortcut, and everyone knew it.

For AI agents, that logic flips entirely. No-code isn't the convenient option anymore. It's the only option that actually works. Understanding why changes how you think about building agents altogether.

Why agents are fundamentally different from apps

Agents aren't executing a fixed spec. They're making judgment calls on your behalf: what to look at, what to prioritise, what counts as a good answer, when to stop and ask. The quality of those calls depends entirely on context that's almost impossible to write down.

What does "urgent" mean in this particular inbox? What level of detail does this customer actually need right now? The knowledge that answers those questions doesn't live in a requirements doc. It lives in the head of whoever's been doing the job for two years, shaped by hundreds of situations they've never fully articulated to anyone, including themselves.

You can't extract that in a kickoff meeting. It has to be built iteratively, by the person who holds it, through direct interaction with the thing being built.

What happens when developers build agents for domain experts

The pattern plays out the same way almost every time. Developers build what they can spec. They test against inputs they can anticipate. The agent works in the demo. Then it hits the real world: the unusual request, the ambiguous phrasing, the situation that's technically in scope but contextually all wrong.

The domain expert looks at the output and knows immediately something's off, but can't easily explain why to someone who doesn't do the job. Feedback cycles slow down. Iteration gets expensive. The agent stays mediocre, or gets shelved before it ever becomes useful.

The problem isn't competence. It's structural. The knowledge required to make the agent good isn't accessible to the person doing the building, and no amount of better briefs or longer sprints changes that.

Why domain experts have to be in the builder's seat

When the person who understands the work can build the agent directly, something shifts. The feedback loop goes from weeks to minutes. They try something, see the output, adjust. They catch the edge cases intuitively because they've lived them a hundred times in the actual job. They know what "correct" looks and feels like, even when they can't fully articulate it, and they can tune toward it in real time.

This isn't really an argument about democratising tech access or lowering the barrier to entry. It's about putting the people with the most relevant knowledge in the builder's seat. That distinction matters more than it might first appear.

You're not building a replacement. You're building a team.

There's an obvious question sitting underneath all of this. If you're the one shaping the agent, teaching it how to handle your work, encoding your judgment into it, are you building something that eventually makes you redundant?

It's worth sitting with that rather than brushing it off. But the question is framed the wrong way. You're building a digital team around yourself, not a replacement for yourself. That team handles the parts of your job that drain you so you can focus on the parts that actually need you. The agent that monitors your deal flow and chases the deals that went quiet isn't replacing you. It's doing the work you never had bandwidth for, in roughly the way you'd have done it, while you're off doing something more valuable.

Every technology that's genuinely stuck around has had this shape. It takes something, but gives back more than it took. The more useful question isn't whether the agent can do your job. It's what you'll do with the time and headspace once it's handling the parts you've outgrown.

What this means for the platform underneath

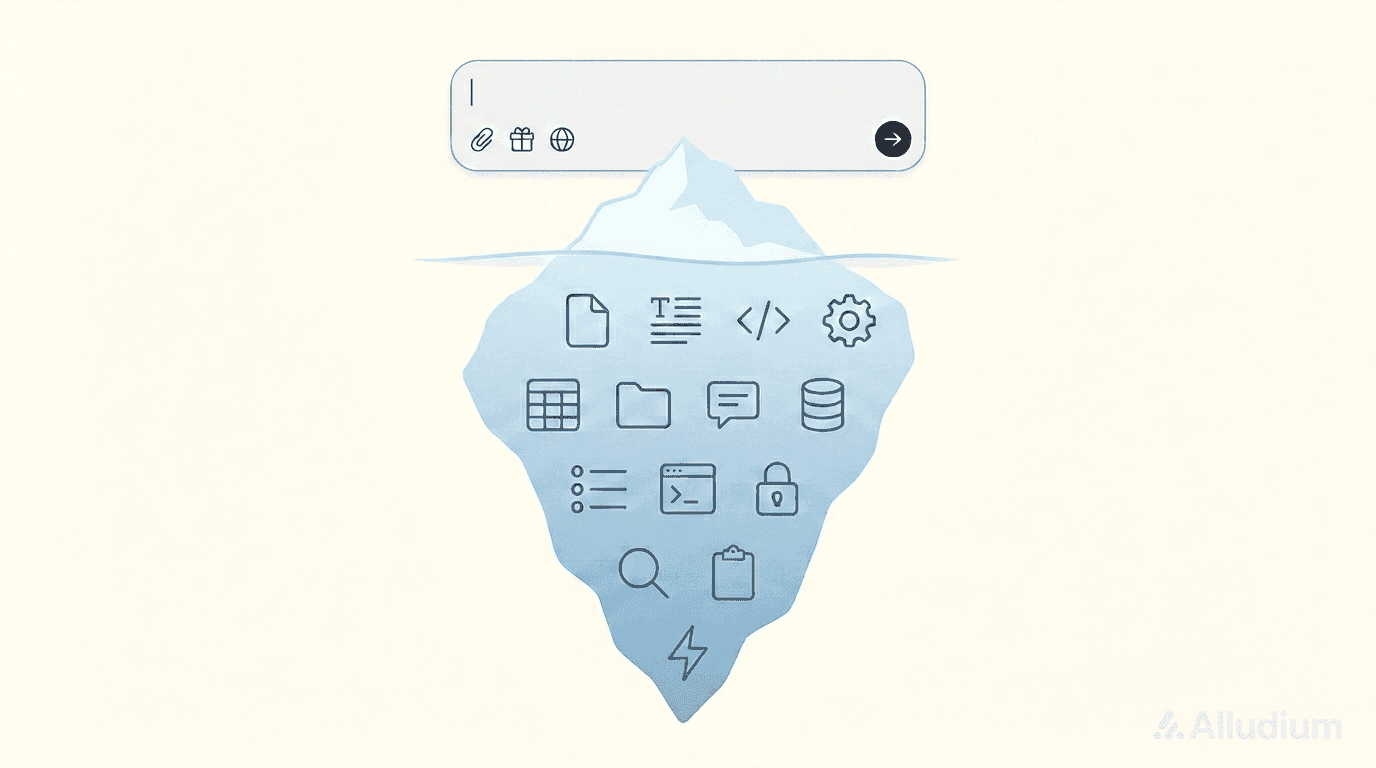

If the domain expert is the builder, the environment they build in matters enormously. They're not professional developers. They don't want to manage dependencies or debug API connections. The infrastructure needs to be solid and, ideally, invisible: reliable integrations, clear permissions, consistent behaviour across every agent they build.

The platform's job is to make the complex stuff disappear so the builder can focus on the one thing only they can do: shaping the judgment. Time spent troubleshooting the environment is time not spent on the work that makes the agent actually good.

There's an organisational dimension to this too. If every domain expert is building on their own stack, fragmentation comes fast. Different assumptions, no shared context, no way for agents to build on each other's work. A shared environment that's already set up correctly means anyone can start building immediately, on top of something stable.

The bigger shift

The question organisations need to start asking isn't which engineering team owns the agent strategy. It's which domain experts understand their workflows well enough, and have the right environment, to build agents themselves.

That's a different hiring question, a different training investment, and a different picture of what AI adoption actually looks like inside a company. The organisations that figure it out early will build agents that actually work. Not because they moved faster, but because the people who know the work are the ones shaping it.

Alludium is built for exactly this.
